Software will save us

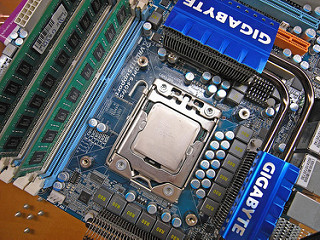

Summary: Some old insights are still true. A chip from 2015 has almost 2000 times as many transistors as one from 1995, but steadily increasing software demands on hardware have given us slower and slower systems. A basic task on a 1995 machine completes only slightly faster on a modern machine. Our computers are not 2000 times better, nor do they make us 2000 times more productive.

I was reading a press release from Intel on the feasibility of chip advancements after the next-gen 10nm chips. Ideas do exist, but nothing will deliver the gains in power and speed that the brute-force “make it smaller with more transistors” approach has delivered for the last 60 years.

It got me thinking about the end game. We have been privileged to sit back and enjoy ever-increasing gains from improvements to chip hardware. However, the nature of exponential growth means we have to hit a limit at some point. That point is fast approaching. It could be 2017 or 2020. It doesn’t matter; in a sense we are already there.

We hit that limit around 2006. That was the point where chip manufacturers could no longer turn more transistors into a faster single core every 2 years, as clock speeds stalled. Instead, Intel and others began making chips with 2+ cores: the guts of two chips inside one package. You could consider this cheating. These multicore chips need software written specifically to run in parallel. Software does not run faster simply because more cores are added. The serial part of a program, plus the overhead of coordinating the cores, limits the gains (see Amdahl’s law in the links). For example, the jump from 2 cores to 4 cores took roughly twice the transistors but ran the same multicore software only about 20% faster.
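To put a number on that, here is a minimal sketch of Amdahl’s law in Python. The function names are my own, and the 50% parallel fraction is an assumption picked because it reproduces the 20% figure above. It is not a measurement of any real program.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup over one core according to Amdahl's law.

    parallel_fraction is the share of the work (0.0 to 1.0) that can be
    spread across cores; the rest always runs serially.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_gain(parallel_fraction: float, old_cores: int, new_cores: int) -> float:
    """Fractional improvement from moving old_cores -> new_cores."""
    old = amdahl_speedup(parallel_fraction, old_cores)
    new = amdahl_speedup(parallel_fraction, new_cores)
    return new / old - 1.0


if __name__ == "__main__":
    # Assumed workload: half of the program can run in parallel.
    gain = relative_gain(0.5, old_cores=2, new_cores=4)
    print(f"2 -> 4 cores: {gain:.0%} faster")
```

Under that assumption, doubling the cores from 2 to 4 buys about 20%. Even a program that is 90% parallel only gains about 57% from the same jump, and no number of cores can push a half-serial program past 2x.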

Where do we go next after the completion of Moore’s law and the end of brute-force improvements to hardware? Optimizing what we already have. There are still improvements to be made to hardware, but nothing that will deliver order-of-magnitude improvements over what we already have. Instead, software engineers are going to have to save the day.

Modern software won’t run very well on chips from 2006, but you can look at similar software available at that time to do a comparison. The scary thing? Even with the boom in parallel processing, software has gotten slower.

Software bloat is the real reason computers slow down as time marches on. It is a concept worth reading about on Wikipedia. I will summarize some of the causes:

1. Lazy software engineers. Hardware has improved hundreds of fold since the 1990s, and software folks have enjoyed making new generations of software whose inefficiency is hidden by the faster hardware.

2. Change in software tools. Prior to the 1990s, software engineers were limited by memory and speed. By necessity, they had to work much harder to create the most efficient solution. Often this meant writing code in assembly, one small step above machine language. Today’s software folks use high-level languages and debugging tools. These are useful, sure, but they are far removed from the actual code that runs on the chip.

3. Software has become huge. No single person understands every part of what has been cobbled together. How could they? A modern operating system is developed by hundreds or thousands of people contributing millions of lines of code.

Many futurists talk about something called the technological singularity: the point where a computer can design a better version of itself, rewriting better and faster versions of itself at an incomprehensible speed. That would lead to an explosion of powerful computers that humans no longer understand. Too late…

Links:

- Intel is officially slowing down the pace of CPU releases – http://www.engadget.com/2016/03/23/intel-eliminating-tick-tock-moores-law/

- The Status of Moore’s Law: It’s Complicated – http://spectrum.ieee.org/semiconductors/devices/the-status-of-moores-law-its-complicated

- Semiconductor device fabrication – https://en.wikipedia.org/wiki/Semiconductor_device_fabrication

- Transistor count – https://en.wikipedia.org/wiki/Transistor_count

- Intel Core – https://en.wikipedia.org/wiki/Intel_Core

- Moore’s law – https://en.wikipedia.org/wiki/Moore%27s_law

- Wirth’s law – https://en.wikipedia.org/wiki/Wirth%27s_law

- Amdahl’s law – https://en.wikipedia.org/wiki/Amdahl%27s_law

- Rethinking software bloat – http://www.freerepublic.com/focus/f-news/592116/posts

- Software bloat – https://en.wikipedia.org/wiki/Software_bloat